The Bug AI Cannot See: How Keeping Humans In The Loop Saves Real Money

🚨 Long Post Alert.

This is longer than my usual fare. Grab a coffee, settle in, and let’s dive deep.

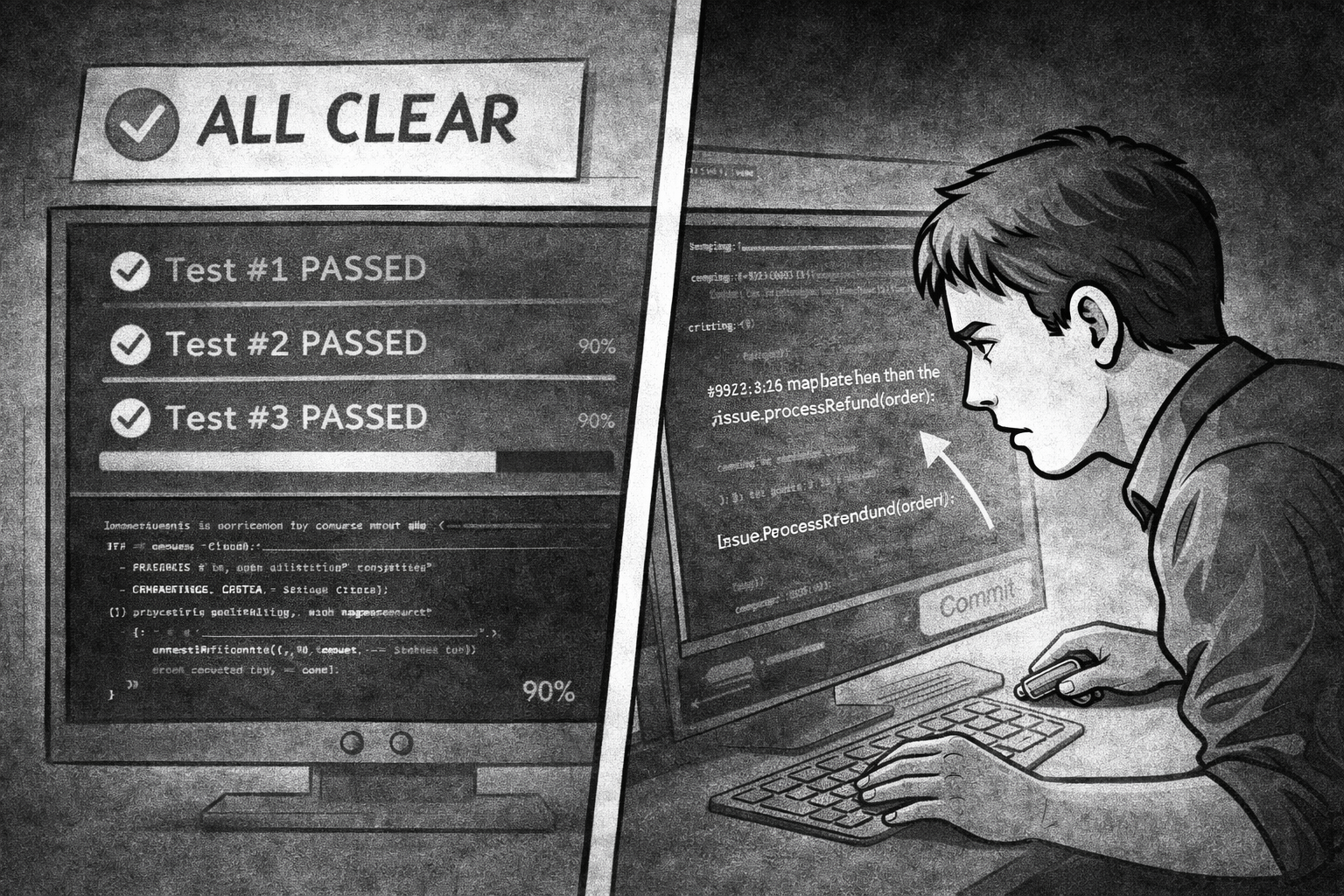

It happened silently. No errors. Tests passing. Pipeline green. I was reviewing AI-generated code before shipping it, a habit I keep, when I caught it: duplicated logic and, inside that duplicate, a missing function call that would have silently corrupted user wallets in production.

My first instinct was to add the missing line and write a test for it. Thirty seconds and done. But then I stopped and asked myself a harder question: why did this happen in the first place?

The Setup That Should Have Been Perfect

I’m building ClickNBack—a financial platform where correctness is the whole point. A purchase can be confirmed in two ways: automatically by a background job, or manually by an admin. Both should do exactly the same thing.

I asked Claude to implement the manual admin version. The conditions were as good as they get:

- Functional specs documenting every step

- API contracts fully defined

- A perfect reference implementation already in the codebase (the background job)

- 500+ tests passing with 90% coverage

- Full type hints and explicit interfaces

The agent implemented it. Tests passed. Coverage held. Everything looked correct.

Except it called wallets_client.confirm_pending() and repository.update_status(), but forgot to call cashback_client.confirm().

That one missing call leaves the cashback transaction record in a pending state while the wallet balance moves to available. Two systems desynchronize—silently, permanently, at scale.
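To make the divergence concrete, here is a minimal sketch with in-memory stand-ins. Everything except the three method names is an assumption for illustration: the fake classes, the function names, the argument lists, the status strings, and the order of the calls are all hypothetical, not ClickNBack's actual code.

```python
from dataclasses import dataclass, field


@dataclass
class FakeWalletsClient:
    """Hypothetical in-memory stand-in for the wallets client."""
    balances: dict[str, str] = field(default_factory=dict)

    def confirm_pending(self, purchase_id: str) -> None:
        self.balances[purchase_id] = "available"


@dataclass
class FakeCashbackClient:
    """Hypothetical in-memory stand-in for the cashback client."""
    records: dict[str, str] = field(default_factory=dict)

    def confirm(self, purchase_id: str) -> None:
        self.records[purchase_id] = "confirmed"


@dataclass
class FakeRepository:
    """Hypothetical in-memory stand-in for the purchase repository."""
    statuses: dict[str, str] = field(default_factory=dict)

    def update_status(self, purchase_id: str, status: str) -> None:
        self.statuses[purchase_id] = status


def confirm_via_background_job(purchase_id, wallets, cashback, repository):
    """Reference path: performs all three steps."""
    cashback.confirm(purchase_id)
    wallets.confirm_pending(purchase_id)
    repository.update_status(purchase_id, "confirmed")


def confirm_via_admin_buggy(purchase_id, wallets, cashback, repository):
    """Duplicated path: the cashback.confirm() call is missing."""
    wallets.confirm_pending(purchase_id)
    repository.update_status(purchase_id, "confirmed")


def run_demo():
    """Run the buggy admin path on one pending purchase and report the states."""
    wallets = FakeWalletsClient({"p1": "pending"})
    cashback = FakeCashbackClient({"p1": "pending"})
    repository = FakeRepository({"p1": "pending"})
    confirm_via_admin_buggy("p1", wallets, cashback, repository)
    return wallets.balances["p1"], cashback.records["p1"], repository.statuses["p1"]
```

In this sketch, `run_demo()` leaves the wallet at "available" and the purchase at "confirmed" while the cashback record is still "pending". Nothing raises, which is exactly why a green pipeline says nothing about it.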

What Silent Corruption Actually Costs

If this shipped to production, one corrupted transaction would become thousands. Weeks pass. Hundreds of thousands of records where the wallet says “available” but the transaction log says “pending.” One day someone notices the audit trail doesn’t reconcile with the wallet state. But by then the database is fundamentally corrupted.

You can’t rebuild user balances from a log that contradicts itself. You rebuild them manually. Transaction by transaction. And every customer affected learns that your system can’t be trusted with their money—not because you made an honest mistake, but because your checks failed to catch it.

That’s not a bug that costs money to fix. It costs trust to rebuild. And trust, once broken publicly, never fully recovers. You become the platform where the balance didn’t match, on every tech forum that discusses what went wrong.

Why an Extra Test Is the Wrong Fix

My first instinct (add the missing line and the corresponding test) is how most engineers would respond. It’s how I responded, initially. But a test that asserts cashback_client.confirm.assert_called_once() is a patch, not a fix. It closes one gap while leaving the underlying problem intact.
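For reference, the patch-style test would look roughly like this. It is a sketch using `unittest.mock.MagicMock`; the function name `confirm_purchase_manually`, its parameters, and the `"confirmed"` status string are assumptions made up for the example.

```python
from unittest.mock import MagicMock


def confirm_purchase_manually(purchase_id, wallets_client, cashback_client, repository):
    """Hypothetical admin path after the one-line patch."""
    cashback_client.confirm(purchase_id)  # the line that was missing
    wallets_client.confirm_pending(purchase_id)
    repository.update_status(purchase_id, "confirmed")


def test_manual_confirmation_confirms_cashback():
    wallets_client = MagicMock()
    cashback_client = MagicMock()
    repository = MagicMock()

    confirm_purchase_manually("p1", wallets_client, cashback_client, repository)

    # Guards this one call in this one function, and nothing else.
    cashback_client.confirm.assert_called_once()
```

The test passes, and it would fail if someone removed the call again. It still says nothing about whether this function and the background job stay in agreement once either one changes.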

The real problem is not that the agent forgot a line. It’s that two separate functions exist that implement the same operation. That’s a DRY violation, and in a financial system, DRY violations are correctness time bombs.

When identical intent is expressed in two places, the only question is when they diverge—not whether. Maybe a future developer adds currency conversion logic to one path but forgets the other. Maybe the next AI agent generates code from the service method as a reference and misses a change made to the job. Maybe you refactor the cashback module and update one caller but not both.

Each of those scenarios produces the same class of silent corruption. And no amount of test-writing prevents the pattern from recurring.

The Abstraction the AI Didn’t See

The right fix was to extract apply_purchase_confirmation()—a shared internal function that both callers delegate to.

The background job calls it. The admin service method calls it. The confirmation state transition now lives in exactly one place. Any future change to confirmation logic—a new side effect, a different ordering, a concurrency constraint—is made once and applies everywhere automatically.
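A minimal sketch of that shape, under stated assumptions: only `apply_purchase_confirmation`, `confirm_pending`, `confirm`, and `update_status` come from the description above. The `ConfirmationDeps` container, the two caller names, the `admin_id` parameter, and the order of the three calls are hypothetical.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class ConfirmationDeps:
    """Hypothetical bundle of the collaborators a confirmation needs."""
    wallets_client: Any
    cashback_client: Any
    repository: Any


def apply_purchase_confirmation(purchase_id: str, deps: ConfirmationDeps) -> None:
    """Single home for the confirmation state transition."""
    deps.cashback_client.confirm(purchase_id)
    deps.wallets_client.confirm_pending(purchase_id)
    deps.repository.update_status(purchase_id, "confirmed")


def confirm_from_background_job(purchase_id: str, deps: ConfirmationDeps) -> None:
    """Automatic path: selection of eligible purchases is omitted here."""
    apply_purchase_confirmation(purchase_id, deps)


def confirm_from_admin(purchase_id: str, admin_id: str, deps: ConfirmationDeps) -> None:
    """Manual path: authorization and audit logging for admin_id are omitted here."""
    apply_purchase_confirmation(purchase_id, deps)
```

Neither caller touches the clients directly, so there is no second copy of the sequence to forget a step in. A new side effect goes into `apply_purchase_confirmation()` once and both paths pick it up.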

The agent had the reference implementation in front of it. The structure of the problem made the pattern obvious. But it couldn’t see it, because recognizing when duplication warrants extraction is a design judgment, not a code generation task. It requires understanding what the system is doing, what could go wrong, and what the right shape of the solution is. That’s different from writing correct syntax.

Why Updating the Prompts Won’t Save You

After catching this, I wondered whether I should update my prompts for the AI and add an instruction like “always check for reference implementations before writing new code.”

But prompts are optimism, not guarantees. There is nothing in the fundamental nature of a large language model that ensures it will consistently honor an instruction buried in a system prompt. It might follow it on the next run. It might not on the one after. The model has no persistent memory, no architectural reasoning about your specific system, and no stake in the outcome.

You can add as many rules as you want to your prompt file. They do not prevent the model from generating plausible-but-wrong code on any given invocation. They lower the probability. They do not eliminate it. And in a financial system—or any system where correctness matters—“lower probability” is not a design strategy.

The real fix is structural: make the wrong thing hard to do. When confirmation logic lives in a shared function, there is no longer a “forget to copy the cashback call” class of bug. You either call apply_purchase_confirmation() or you don’t confirm the purchase.

The One Thing Automation Still Cannot Do

AI is genuinely powerful. Claude generates fast, applies patterns well, and handles boilerplate with ease. But it generates locally plausible code—code that looks right, compiles, and passes the tests you wrote. It doesn’t reason about the global shape of your system.

Spotting a missing abstraction requires you to look at two pieces of code, recognize that they share intent, understand what happens when they diverge, and decide that the right response is structure, not tests. That chain of reasoning draws on design experience, system knowledge, and an understanding of failure modes that a model doesn’t have.

That is what senior engineers do. Not just write correct code—design correct systems.

In the world of AI-assisted development, that judgment is not a nice-to-have. It is the thing that separates a demo from a production system, a shipped feature from a corruption incident, a green pipeline from a trust problem you can never fully repair.

The agent made a mistake. I caught it, and fixed the right thing. The system is now structured so that mistake cannot happen again—not because of a rule in a prompt file, but because the code itself enforces the constraint.

That’s the work that still requires a human.